I was reading about Kagi, a search engine you pay a monthly subscription to use for. Our world is so fucked up, that explicitly paying with money for a service is the only hope to avoid having your data slurped for profit.

So I asked myself: how do I actually use a search engine?

§ Gathering the data

Open Firefox, press CTRL+H (to open the history), copy the past month data (e.g. November 2023), dump it into a text editor and sort the results:

cat nov-searches.txt | sortI only use DuckDuckGo so I can easily filter out everything else. How many queries? wc says about 300:

cat nov-searches.txt | sort | rg duckduckgo.com | wc

294 294 39068Let's remove duplicated queries (I tend to refine searches by running multiple variants of the query terms), clean up a little bit the urlencoding and also filter out other search parameters not interesting. Let's just isolate the search terms; for example in the following query I want to isolate the part in red:

https://duckduckgo.com/?q=steez+posters&iar=images

cat nov-searches.txt | sort | rg duckduckgo.com \

# isolate only the search terms, discard all the rest

| rg --pcre2 -o -e 'q=(.+?)(?=&)' -r '$1' \

# remove any kind of spaces (urlencoded or not)

| tr '+' ' ' | tr '%20' ' ' | tr -d ' 'Before proceeding, a little pause to disentangle that little ripgrep black magic that I just learned:

--pcre2use the PCRE2 engine, needed for backreferences and look-around-oonly show the matched content-e 'q=(.+?)(?=&)'the regular expression will isolate everything betweenq=and the first&-r '$1'just print the content of the first group capture (in the previous example(.+?)printssteez+posters)

Reminder that Regex 101 is a good resource for experimenting.

Finally, I'd like an idea of how many queries I run, excluding some of the variants (refinements over the same search):

cat nov-searches.txt | sort | rg duckduckgo.com \

| rg --pcre2 -o -e 'q=(.+?)(?=&)' -r '$1' \

| tr '+' ' ' | tr '%20' ' ' | tr -d ' ' |

| sort | uniq -c | sort -rnThe above grouping is a little bit imprecise because I'm not filtering out similar matches. I need a tool to calculate proximity, for example the good old Levenshtein distance. I've tried using agrep but has some limitations. For the moment it will suffice, given the small sample.

§ The stats

The stats for the 3 last months:

| month | total queries | unique queries | repeated queries (avg) |

|---|---|---|---|

| Nov 2023 | 294 | 70 | 4.20 |

| Oct 2023 | 234 | 50 | 4.68 |

| Sep 2023 | 226 | 43 | 5.25 |

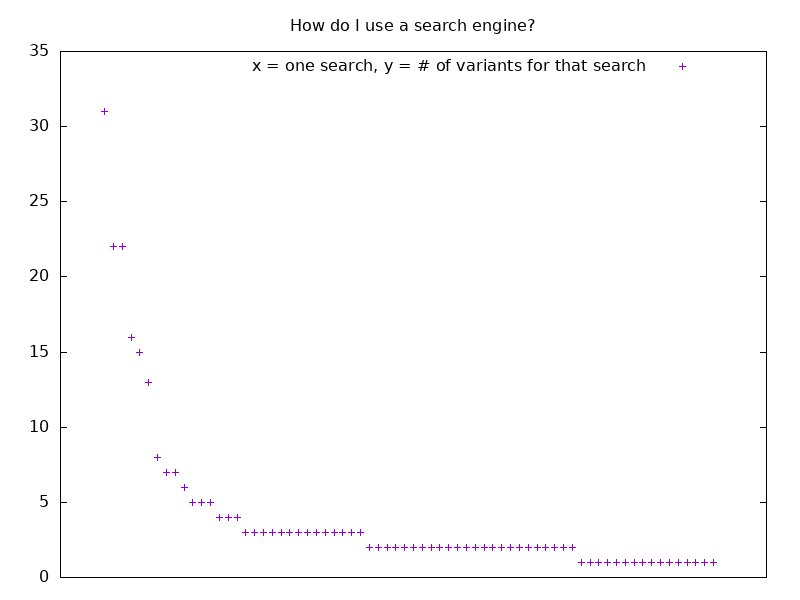

I'll save the output from the previous script into a file input.dat and plot the values using another tool I have a love/hate relationship with: gnuplot.

#!/usr/bin/env gnuplot

set terminal png size 800, 600

set output "searches.png"

set key center top

set style fill solid

set title "How do I use a search engine?"

unset xtics

plot 'input.dat' using 1 t "# of variants" with lpThis script will produce the following image for the November 2023 dataset.

I infer that I might not be very good at searching stuff because in average I repeat about 4 times the same query with slight variations.

Also, for some reason I expected I was using a search engine more. Part of the reason is probably that I often use DDG bangs and some quick custom searches - in these cases I skip DDG and am redirected directly to the website that I want to query.